Author: Leila Wu

Date: Feb 5, 2026

Reading time: 5 min read

Synthetic Research

The gap between customers and brands is widening. According to Gartner, 84% of CMOs report high levels of strategic dysfunction, and 61% say their plans are driven by operational demands rather than customer-centric strategy. Slow research makes it even harder to adapt to shifting customer needs.

Meanwhile, AI is advancing faster than we imagined. Every year the benchmarks designed to test the limits of AI are raised, and models still advance quickly enough to outpace the tools used to measure them.

The prudent strategy is to evolve with AI, not wait for it. That means human and machine collaboration.

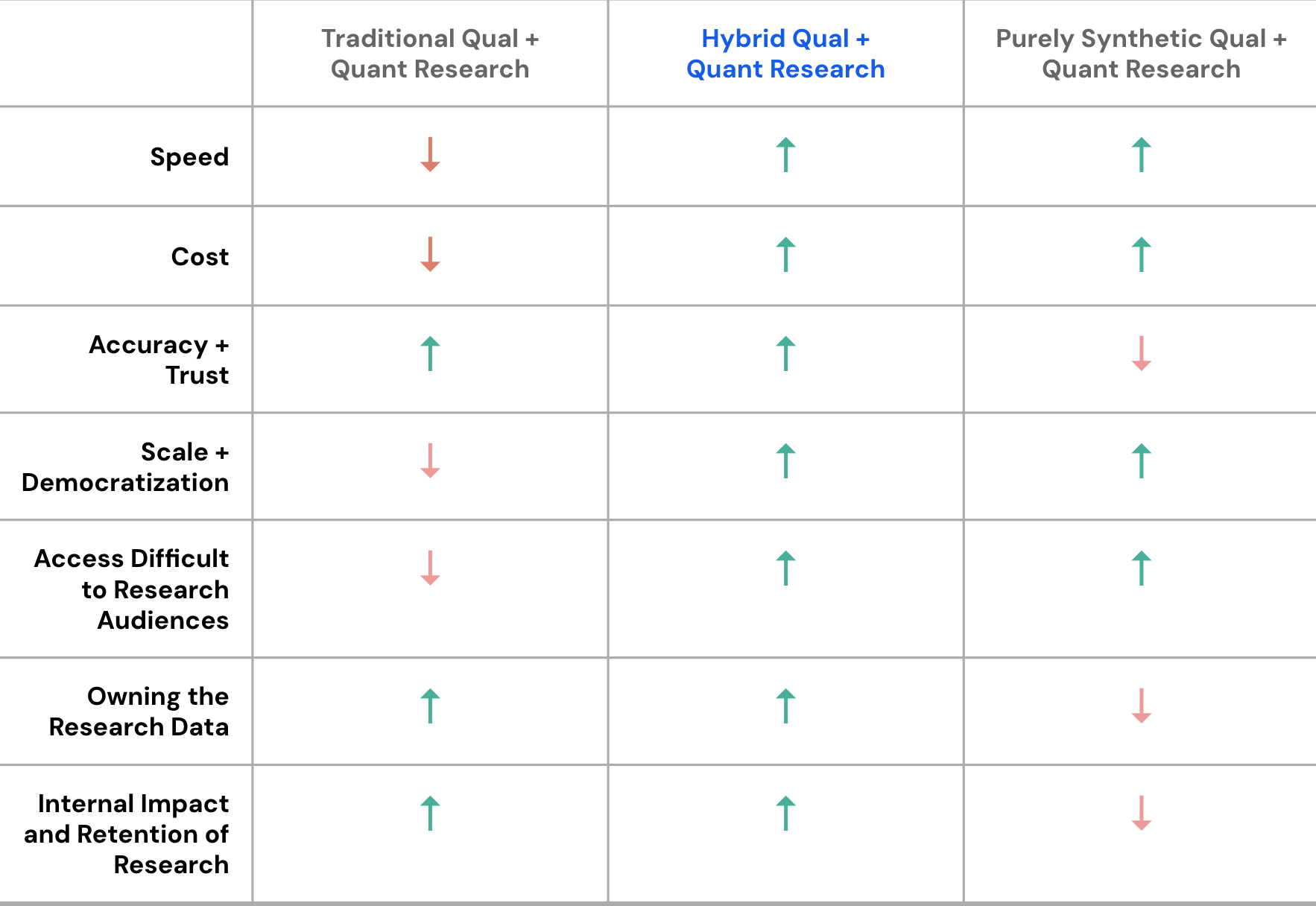

We've spent years studying how brands build understanding of their customers, and we've watched that process get slower, more expensive, and more disconnected from the speed at which decisions actually get made. We've also kept a close eye on the emerging landscape of synthetic research and AI personas, and found a consistent pattern: many see synthetic research as a fast replacement for traditional research. Academic evidence says otherwise. Studies are confirming that LLM-based synthetic respondents, when used in isolation, consistently fail on demographic subgroups and sensitive attributes, introducing systematic biases that aren't obvious without direct comparison to real data.

The consensus is clear: synthetic research works best when paired with human judgment and insight. That finding is the foundation of everything we've built.

As the synthetic research space develops, many new tools optimize for speed, sometimes with thin governance. Research firms offer rigorous studies that take months and can slow down decision-making. We've deliberately built in the whitespace between them — a governance-first, human-led hybrid that makes transparency a feature, not a footnote. That means publishing validation protocols, acknowledging model limits, and treating divergence between synthetic and human findings as a diagnostic signal rather than an error to hide.

Our hybrid approach also lets human and machine each do what they do best. AI can effectively speed up human processes but won't and shouldn't replace human moderation, feedback, and insight. Humans, in turn, proactively manage error, bias, and model drift, which lets us make more confident decisions from the output rather than trusting a black box.

Where AI Leads

- Synthesizing

- Scaling qual into quant

- Democratizing tests

- Reaching niche audiences

- Freeing up time

Where Humans Lead

- Human-to-human interaction

- Collaboration and context

- Feedback and interaction with creative and in-house teams

- Strategy vs. outputs

- Moderating, interpreting, and owning the insight

The outcome of research isn’t a document; it’s sustained, shared understanding. We want to put the power of research in everyone’s hands so teams can explore ideas faster, make evidence-based decisions, and embed the customer into everyday work.

A flexible, scalable system that keeps getting smarter.

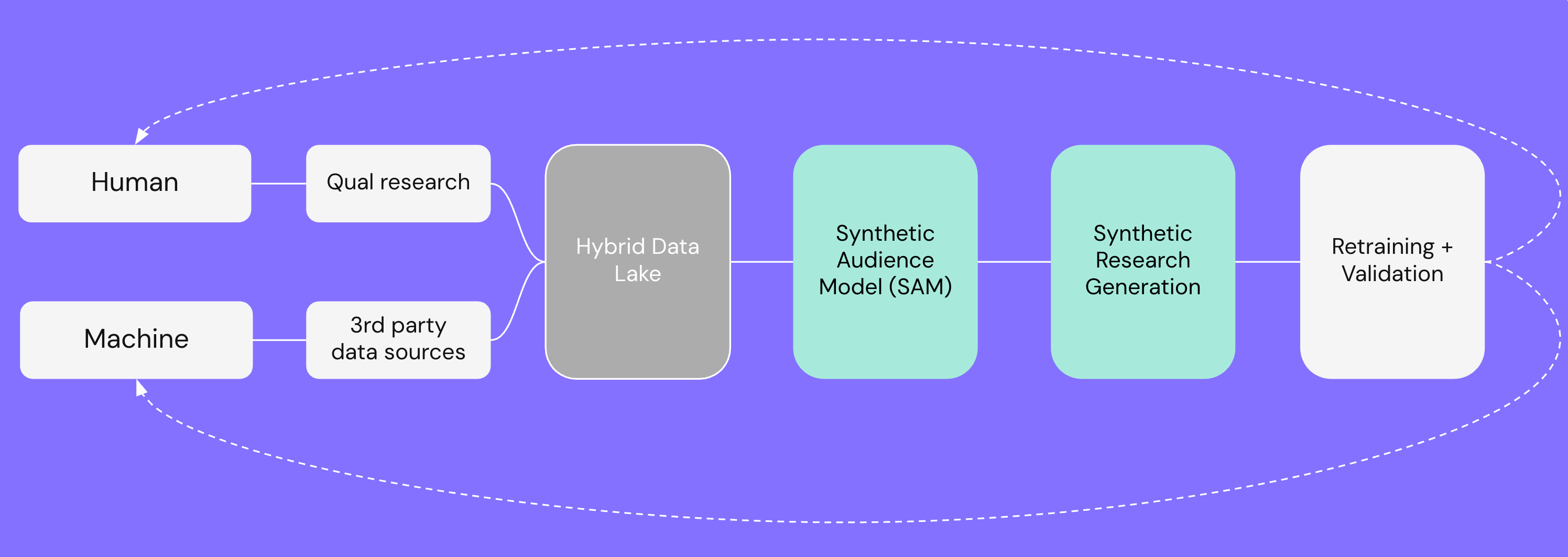

We are building a continual learning loop that compounds over time, maximizing the strengths of humans and AI by staying flexible, scalable, and adaptable.

Our approach starts with conversations with people.

- Best way for teams to empathize with real customers.

- Planned or “pop-up” moments reduce cost and build owned panels.

- Close collaboration with in-house and creative for nuanced, iterative questions.

- Each interview also validates synthetic research (feedback loop).

We build a privacy-safe knowledge base in a hybrid data lake.

- Secure space to combine qual interviews, third party, and observational data.

- Continuously curated to reflect market shifts & mindset changes.

- Enables targeted future qual and richer synthetic modeling.

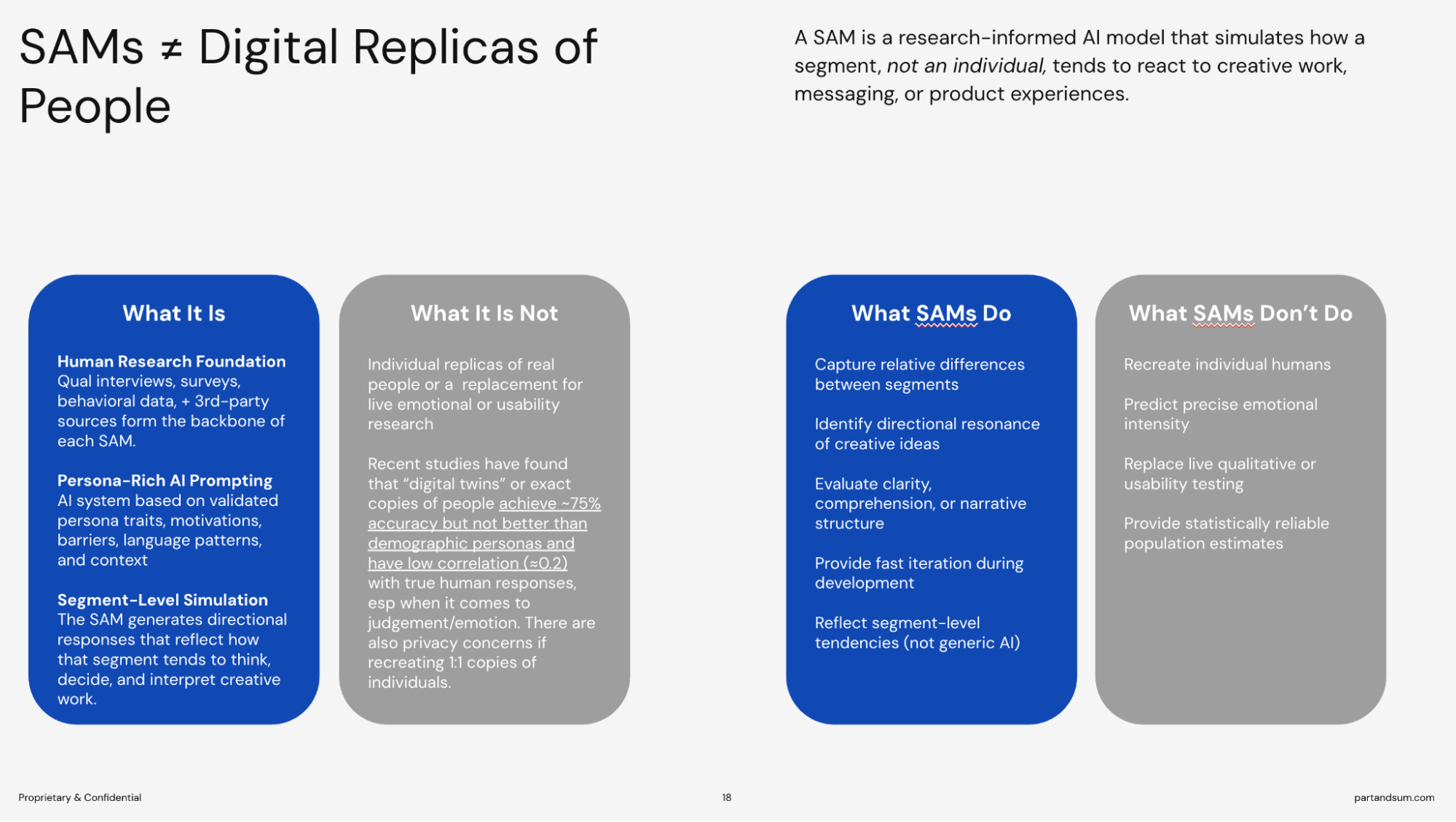

We then generate Synthetic Audience Models (SAMs). SAMs are conversational interfaces, built in a moderated AI environment, that teams can interact with directly, guided by trained Part and Sum strategists using structured prompt methodologies.

- Self-serve for sales/creative to test collateral and concepts.

- Synthetic research across segments, roles, and seniority.

- Designed to cite primary research, acknowledge limits, and include safeguards.

- The AI copies are then surveyed at scale to quantify patterns as needed.

- Findings are synthesized at the pattern level, never treated as standalone data points, and explicitly mapped against human research to surface areas of alignment, divergence, and high-confidence signal.
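One way to picture the mapping step in the last bullet is the minimal Python sketch below. It is illustrative only and not a description of the actual SAM pipeline: the function name `compare_patterns`, the 10-point tolerance, the "unvalidated" label, and the example patterns and shares are all assumptions made up for this example.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class PatternComparison:
    pattern: str
    human_share: Optional[float]      # share of human respondents, 0.0 to 1.0
    synthetic_share: Optional[float]  # share of synthetic respondents, 0.0 to 1.0
    status: str                       # "aligned", "divergent", or "unvalidated"


def compare_patterns(
    human: Dict[str, float],
    synthetic: Dict[str, float],
    tolerance: float = 0.10,  # hypothetical threshold, not a published value
) -> List[PatternComparison]:
    """Map pattern-level synthetic findings against human research.

    A pattern seen in both sources is "aligned" when the shares differ by
    no more than `tolerance`, otherwise "divergent". A pattern seen in only
    one source is "unvalidated" because there is nothing to compare it to.
    """
    results = []
    for pattern in sorted(set(human) | set(synthetic)):
        h = human.get(pattern)
        s = synthetic.get(pattern)
        if h is None or s is None:
            status = "unvalidated"
        elif abs(h - s) <= tolerance:
            status = "aligned"
        else:
            status = "divergent"
        results.append(PatternComparison(pattern, h, s, status))
    return results


# Made-up example shares, for illustration only.
human_findings = {
    "price_sensitivity": 0.62,
    "brand_trust": 0.40,
    "onboarding_friction": 0.55,
}
synthetic_findings = {
    "price_sensitivity": 0.58,
    "brand_trust": 0.71,
    "sustainability": 0.30,
}

for row in compare_patterns(human_findings, synthetic_findings):
    print(f"{row.pattern}: {row.status}")
```

In a sketch like this, a "divergent" row is not discarded. It is the diagnostic signal that tells a strategist where to look more closely or where more human interviews are needed.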

In this new AI world, the services and solutions are being built as we speak. We're not waiting on the sidelines; we're in it, testing and learning alongside our clients, and building the future of human-led synthetic research together. We know that our offerings will continue to evolve as we test, learn, and refine that vision.

To learn more, book a meeting with us here.
